Humantic AI is termed many things — an AI that can actually predict personality without a test, a revolution in understanding human behavior, a technology that could reshape the world. But how exactly does it do it with a high degree of accuracy?

In this post, we answer this vexing question. And more.

Before we do that, it’s important to understand that we are not a ‘fundamental research’ company. We are an ‘applied research’ company. We apply what has been researched over the years. And proven.

Amarpreet Kalkat, CEO, Humantic AI

Our technology has Machine Learning & AI on one hand, Computational Psychometrics and Psycholinguistics in the middle and Social/IO Psychology on the other hand. When you combine all these pieces, you create a very powerful cross-domain applied research system.

As you may notice, our AI relies on not one but two primary approaches: psycholinguistics and computational psychometrics. That is also why it has higher accuracy and fault tolerance than its peers, but more on that later.

Psycholinguistics

Wikipedia defines psycholinguistics as the science of studying interrelations between language and psychological aspects, aka personality. Unknown to most, this field (even in its current form) has been around for 25+ years, with hundreds of research papers published so far and thousands of citations.

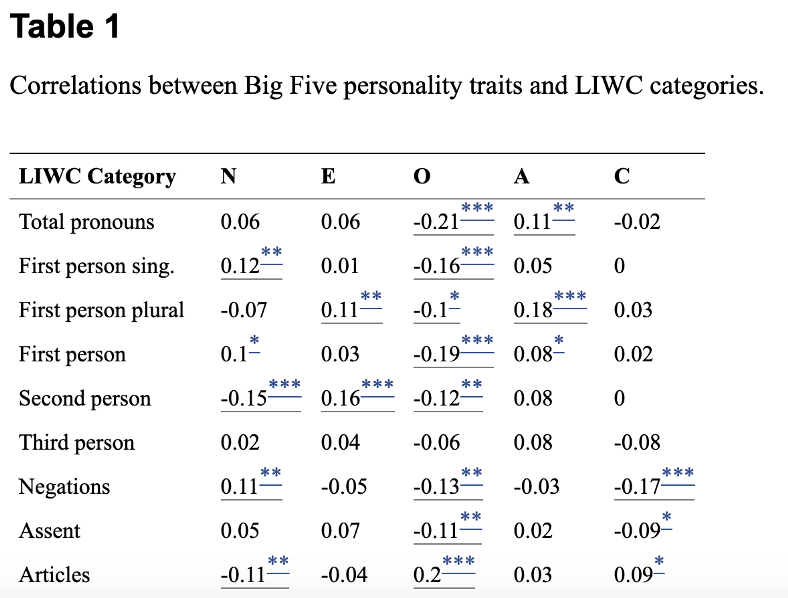

Here is a snippet from one such research paper, which shows how linguistics could be used to reliably predict personality.

The work done by Dr. James Pennebaker of UT Austin with Linguistic Inquiry and Word Count (LIWC) is regarded as seminal in kicking off a computer-based approach to psycholinguistics.

A simple Google search would list hundreds of other research papers validating the efficacy of this approach. IBM Watson takes the same linguistics-based approach in its personality prediction algorithms and lists 80 research papers in its reference list.

However, the purely linguistics-based approach suffers from a couple of key challenges (detailed later in this article). The solution to these challenges is to go beyond linguistics, as Humantic AI does, and that is where computational psychometrics comes in.

Computational Psychometrics

Wikipedia defines computational psychometrics as the fusion of traditional psychometrics, cognitive sciences, and AI models applied to large-scale data. Instead of relying on language, it relies on other signals: activity patterns, profile information, metadata, and more. For example, our models show a clear correlation between agreeableness and the number of recommendations someone has given on LinkedIn. Of course, it is one among hundreds of factors being analyzed by the AI and ultimately plays a minuscule role by itself.

The work done by Dr. Michal Kosinski of Stanford University, Dr. David Stillwell of Cambridge University, et al. is seminal in this area. Dr. Kosinski’s website lists a number of research papers published by him and his peers in this area. And while this field dates back only 10+ years or so, the science and accuracy of the approach are widely accepted as proven by top researchers in the field.

Validation & Accuracy

A combination of the two approaches listed above makes Humantic AI highly accurate, outscoring every other player by a significant measure.

In an empirical study undertaken by I/O Psychologist Dr. Tom Janz, the observed behavior of 120 individuals was compared against their Humantic AI predicted behavior. The average correlation across 16 different factors measured by Humantic AI is 0.71, which vastly outscores the published correlation for IBM Watson at 0.35. Humantic AI can predict personality with 85%+ accuracy, which is not only better than what a human could achieve, it is also as good as or better than the accuracy of best-in-class psychometric tests (like Hogan).
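To make the "average correlation across factors" figure concrete, here is a minimal sketch of how such a number can be computed. It assumes the figure is a plain mean of per-factor Pearson correlations between observed and predicted scores, which the study summary above does not spell out. The function names and the toy scores are made up for illustration and are not data from the study.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


def average_correlation(observed, predicted):
    """Mean of the per-factor correlations.

    Both arguments map a factor name to a list of scores, one per individual,
    in the same order of individuals.
    """
    factors = sorted(observed)
    return sum(pearson(observed[f], predicted[f]) for f in factors) / len(factors)


# Toy scores for 2 factors and 5 individuals (illustration only, not study data).
observed = {"openness": [1, 2, 3, 4, 5], "agreeableness": [2, 4, 6, 8, 10]}
predicted = {"openness": [2, 4, 6, 8, 10], "agreeableness": [10, 8, 6, 4, 2]}

print(average_correlation(observed, predicted))
```

In a real validation, each list would hold one score per participant (120 in the study described above) and the dictionary would hold one entry per measured factor.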

Challenges & Concerns

A technology that lies at the intersection of multiple fields understandably needs to address multiple challenges, which Humantic AI addresses unequivocally.

Challenges with Psycholinguistics

The challenge with a purely linguistics-based approach is that a large volume of written text is required to produce a result with high accuracy. More than 2000 words are required for high accuracy, and the results keep getting more accurate up to 3000 words. On the lower side, IBM Watson produces a warning below 600 words and Humantic AI refuses to predict results below 300 words (it provides a confidence interval starting at 40% for 300 words).
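The word-count thresholds above can be read as a simple gate on the input text. The sketch below is only an illustration of that reading: the band names are hypothetical, and counting words by splitting on whitespace is an assumption, not a description of how Humantic AI actually counts them.

```python
MIN_WORDS = 300             # below this, no prediction is made (per the article)
HIGH_ACCURACY_WORDS = 2000  # more than this is needed for high accuracy
SATURATION_WORDS = 3000     # accuracy keeps improving up to this volume


def text_volume_band(text: str) -> str:
    """Classify a text by word count into a hypothetical accuracy band."""
    word_count = len(text.split())  # assumption: whitespace-separated tokens
    if word_count < MIN_WORDS:
        return "refuse"
    if word_count <= HIGH_ACCURACY_WORDS:
        return "limited_accuracy"
    if word_count < SATURATION_WORDS:
        return "high_accuracy"
    return "saturated"


print(text_volume_band("word " * 250))
print(text_volume_band("word " * 2500))
```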

The other challenge with a purely linguistics-based approach (which is adopted by most players in this space) is that the text could have been authored by someone else (on a professionally written LinkedIn profile, for example), or written in a context with low relevance (an article criticizing a policy, for example).

Computational psychometrics addresses both these challenges.

Challenges with Computational Psychometrics

While the availability of enough data is a common challenge across all Machine Learning/AI-based approaches, the specific challenge is in the area of privacy, as most of the work has been done with social data. In fact, most of the research in the field focuses on highlighting what is possible to predict from social data and the risks to individual privacy that should be borne in mind.

Humantic AI addresses this challenge in the most unequivocal way possible: by simply not using any social data in the first place. Users cannot input any Facebook, WhatsApp, or chat data into Humantic AI. Users can only input data that is already available to them, namely a resume, a LinkedIn profile, or simple text. We term this approach data recycling. It ensures that the algorithm is never using data that the user is not aware of, putting the user always in 100% control of the AI.

Algorithm Gaming

Efforts to game algorithms have existed forever and will persist in the future, but it is a game of cat and mouse that is mostly won by the algorithms. The most famous example is Google Search and the whole dark SEO industry, and we all still trust Google because we know that it knows how to handle those challenges.

Nevertheless, gaming of personality AI is still mostly a theoretical construct. More importantly, it would be extremely hard to do even later: imagine a normal person reading the graph shared above, then trying to use fewer pronouns in their language for years so as to appear more ‘open’, and managing to change the result by <1%, as it is 1 out of hundreds of factors being relied upon by the AI.

Ghostwriting

A small number of profiles on LinkedIn tend to be ghostwritten. While this has minimal impact on overall accuracy, Humantic AI undertakes multiple measures to address this issue: certain kinds of linguistic data that are unlikely to be ghostwritten (like recommendations, post comments, etc.) get higher value; the relevance of each snippet is determined (a role description with details of the company instead of the role is omitted); and ultimately, the role of linguistics in the AI is capped at <50% anyway.
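The three measures above (higher value for hard-to-ghostwrite sources, dropping irrelevant snippets, and capping the linguistic share below 50%) can be sketched as a weighted blend. Everything in this sketch is a hypothetical illustration: the `Snippet` type, the weights in `SOURCE_WEIGHTS`, the cap value of 0.49, and the blending formula are assumptions, not Humantic AI's actual model.

```python
from dataclasses import dataclass

LINGUISTIC_CAP = 0.49  # assumed value; the article only says "below 50%"

# Hypothetical weights: sources unlikely to be ghostwritten count more.
SOURCE_WEIGHTS = {"recommendation": 2.0, "comment": 2.0, "summary": 1.0}


@dataclass
class Snippet:
    source: str     # e.g. "recommendation", "comment", "summary"
    score: float    # hypothetical per-snippet trait score in [0, 1]
    relevant: bool  # False for text that is about the company rather than the person


def linguistic_score(snippets):
    """Weighted mean over relevant snippets, or None if nothing usable remains."""
    kept = [s for s in snippets if s.relevant]
    total_weight = sum(SOURCE_WEIGHTS.get(s.source, 1.0) for s in kept)
    if total_weight == 0:
        return None
    weighted = sum(SOURCE_WEIGHTS.get(s.source, 1.0) * s.score for s in kept)
    return weighted / total_weight


def blended_score(snippets, other_signal_score, linguistic_weight=0.6):
    """Blend linguistic and non-linguistic scores, capping the linguistic share."""
    ling = linguistic_score(snippets)
    if ling is None:
        return other_signal_score
    w = min(linguistic_weight, LINGUISTIC_CAP)
    return w * ling + (1 - w) * other_signal_score


snippets = [
    Snippet("recommendation", 1.0, True),
    Snippet("summary", 0.0, True),
    Snippet("role_description", 0.5, False),  # omitted as irrelevant
]
print(blended_score(snippets, other_signal_score=0.0, linguistic_weight=0.9))
```

The point of the cap is that even a fully ghostwritten profile can move the final score by less than half of its range, because the non-linguistic signals always carry the majority of the weight.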

Less Data

The most valid concern is that of questionable accuracy when there just isn’t enough data available. Humantic AI addresses this in a manner that leaves no room for doubt: it doesn’t provide results until it can be at least 40% confident in its ability. Above 40%, it gives out a ‘confidence score’ with every result based on the amount of data available. Based on the exact requirements of their use case, users can choose to provide additional input data if the confidence score is below a certain %, or skip using the results completely if additional input data is not available.
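On the user's side, the decision described above can be sketched as a small rule. This is a minimal illustration, assuming the confidence score arrives as a percentage and that a missing score means no result was produced. The function name, its parameters, and the returned labels are hypothetical and not part of any Humantic AI API.

```python
from typing import Optional

MIN_CONFIDENCE_PCT = 40.0  # below this, no result is provided (per the article)


def decide(confidence_pct: Optional[float],
           required_pct: float,
           more_data_available: bool) -> str:
    """Return what a user might do with a result, given its confidence score."""
    if confidence_pct is None or confidence_pct < MIN_CONFIDENCE_PCT:
        return "no_result"      # nothing to use; the system declined to predict
    if confidence_pct >= required_pct:
        return "use_result"     # confident enough for this use case
    if more_data_available:
        return "add_more_data"  # supply more input and try again
    return "skip_result"        # not confident enough, no more data to add


print(decide(55.0, required_pct=70.0, more_data_available=True))
```

The `required_pct` threshold is the use-case-specific choice: a low-stakes use case might accept a lower score than a high-stakes one.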

As the world becomes more virtual and our interactions with software and AI increase, it becomes vital to ensure that we don’t become mechanized, and that all interactions are humanized as much as possible. From the largest Fortune 50 organizations to pre-launch startups, Humantic AI makes it possible.

Amarpreet Kalkat, CEO, Humantic AI